What makes impersonation scams effective?

What makes impersonation scams work: spoofed sites and identities, AI voice and video, and scale.

How do scammers fake websites, apps, and identities online?

Domain and digital impersonation refers to the technical layer that makes scam identities look real online. Instead of impersonating a person directly, the criminal impersonates the websites, apps, phone numbers, message threads, and digital infrastructure people rely on to decide what is legitimate.

Every impersonation category in this guide depends on digital infrastructure. The lie still needs a delivery system. That is where domain spoofing, app clones, phishing kits, and social spoofing come in.

The most common digital impersonation techniques include:

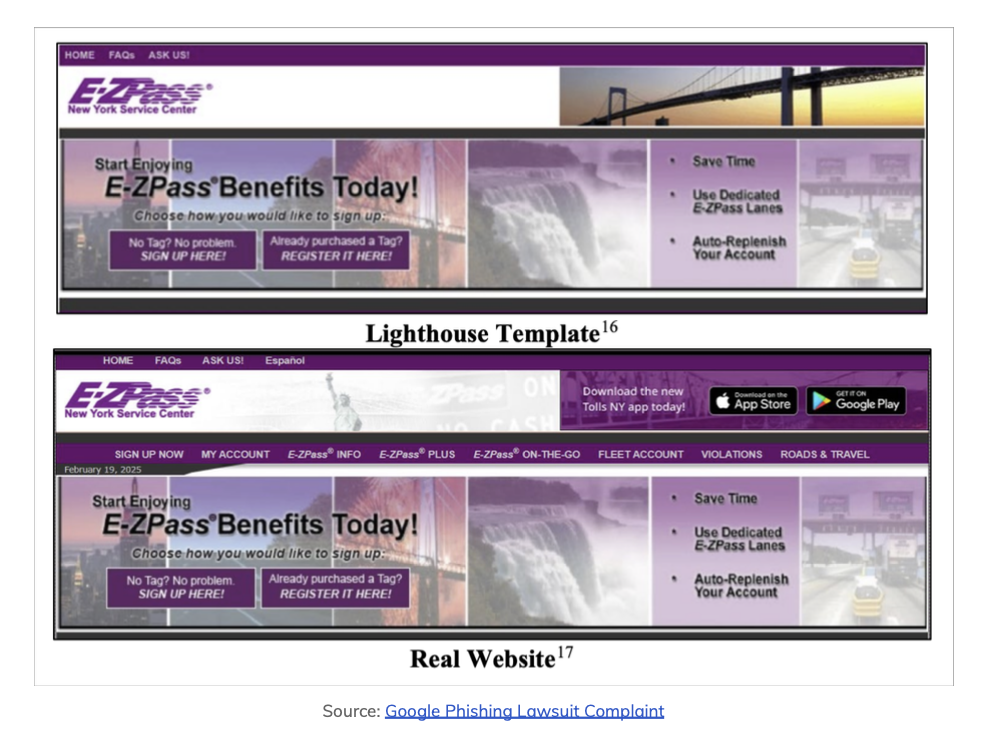

- Spoofed websites and typosquatting: Chainalysis reported that scam operators can now buy out-of-the-box phishing kits, one of which powered a large-scale E-ZPass scam. The accompanying screenshot shows how closely a fake site can resemble the real one. The scam generated $1B in revenue over three years, affecting 1 million people in 121 countries.

- Fake mobile apps: Criminals copy the visual design of exchange or wallet apps to steal credentials and private keys.

- Caller ID and SMS spoofing: Scammers inject fake messages into the same thread as legitimate bank texts, making the fraud feel pre-verified.

Modern impersonation is not always handcrafted. It is often assembled from ready-made infrastructure. That makes these scams faster to launch, easier to localize, and cheaper to scale.
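To make the typosquatting idea concrete, here is a minimal sketch of how a lookalike domain might be flagged by comparing it against a short list of trusted domains. Everything named here is an assumption for illustration: the `TRUSTED_DOMAINS` list, the `.example` domains, the character-swap table, and the distance threshold of 2 are hypothetical and not drawn from any cited report or real product.

```python
import unicodedata
from typing import Optional

# Hypothetical allowlist: domains the user actually does business with.
# The reserved ".example" TLD is used so no real site is implied.
TRUSTED_DOMAINS = ["mybank.example", "myexchange.example"]

# A few common digit-for-letter swaps. Illustrative, not exhaustive.
LOOKALIKE_CHARS = str.maketrans({"0": "o", "1": "l", "3": "e", "5": "s"})


def normalize(domain: str) -> str:
    """Lowercase, drop a trailing dot, fold compatibility characters and digit swaps."""
    domain = unicodedata.normalize("NFKC", domain.strip().lower()).rstrip(".")
    return domain.translate(LOOKALIKE_CHARS)


def edit_distance(a: str, b: str) -> int:
    """Levenshtein distance: insertions, deletions, and substitutions."""
    previous = list(range(len(b) + 1))
    for i, ch_a in enumerate(a, start=1):
        current = [i]
        for j, ch_b in enumerate(b, start=1):
            cost = 0 if ch_a == ch_b else 1
            current.append(min(previous[j] + 1,          # delete from a
                               current[j - 1] + 1,       # insert into a
                               previous[j - 1] + cost))  # substitute
        previous = current
    return previous[-1]


def lookalike_of(domain: str, max_distance: int = 2) -> Optional[str]:
    """Return the trusted domain this one appears to imitate, or None."""
    raw = domain.strip().lower().rstrip(".")
    if raw in TRUSTED_DOMAINS:
        return None  # exact match: this is the real domain
    candidate = normalize(raw)
    for trusted in TRUSTED_DOMAINS:
        if edit_distance(candidate, normalize(trusted)) <= max_distance:
            return trusted
    return None


if __name__ == "__main__":
    for seen in ["mybank.example", "mybannk.example",
                 "myexchang3.example", "weather.example"]:
        print(seen, "->", lookalike_of(seen))
```

A check like this only catches near-miss spellings. It would not catch subdomain tricks, lookalike letters from other alphabets beyond simple compatibility forms, or a convincing site hosted on an unrelated domain, and a looser threshold would start flagging legitimate sites with similar names.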

How is AI changing impersonation scams?

Generative AI has removed many of the weaknesses that used to expose impersonation scams. Criminals no longer need to rely on bad acting, awkward email grammar, or stolen photos that can be traced with a reverse image search. They can now generate convincing identities, voices, scripts, and live interactions on demand.

Tools like ChatGPT, Gemini, and Claude can support many of these capabilities, but scam-specific AI services are also available. These services allow tactics that the mainstream models' terms of use prohibit.

The economic incentive is significant. Chainalysis reported that AI-enabled scams were 4.5 times more profitable than traditional scams in 2025.

Voice cloning

Voice cloning is now cheap, fast, and increasingly accurate. Deepstrike reported that a convincing clone can be created from three seconds of audio, and some examples have reportedly cost less than a dollar to generate. On a video-dominated internet, this means scammers can easily pull the voice data they need from a short clip on social media.

Deepfake video

Deepfake video lets anyone with enough samples of a person's likeness recreate that person's image and voice live on screen. The fake video tracks the impersonator's facial movements and gestures, while the impersonator's voice is replaced with a convincing imitation of the person being impersonated.

Keepnet Labs reported that a deepfake attempt occurred every five minutes in 2024 and that deepfake incidents rose 257% from 2023 to 2024. This matters because a live video call used to be a reasonable way to confirm someone's identity. Now it needs to be cross-checked against several other forms of verification.

AI-generated text and personas

Text-based impersonation has also improved. America's Credit Unions data shows that 82.6% of analyzed phishing emails between September 2024 and February 2025 showed signs of AI use. AI also allows scammers to maintain multiple natural-sounding conversations at once, eliminate grammar errors, localize slang, and personalize scripts around a target's social media footprint.

| Attack vector | Traditional scam | AI-enabled scam |
|---|---|---|
| Voice | Obviously bad English, rigid script | Realistic cloned voice with live responses |
| Video | Avoided or pre-recorded | Deepfake video chat |
| Text | Generic template, broken grammar | Personalized, polished, context-aware outreach |
| Scale | One operator managing a few victims | Automated support for dozens of victims |
| Profitability | Baseline | 4.5x more profitable |

FAQ

- Scammers use AI for voice cloning, deepfake video, and AI-generated text to impersonate trusted people or institutions. Voice clones can be made from seconds of audio; deepfakes can mimic a CEO or family member on a video call; and AI-polished phishing and support chats remove the grammar and spelling tells that used to expose fakes. The result is that impersonation is harder to spot and more profitable for criminals.

- Scammers use domain spoofing and typosquatting, fake mobile apps that copy real exchange or wallet designs, phishing kits sold as ready-made products, and caller ID or SMS spoofing so that messages appear in the same thread as real bank or brand communications. Much of this infrastructure is bought off the shelf, which makes scams faster to launch and easier to scale.

- Deepfake video uses AI to map someone's face and voice onto another person's movements in real time. Scammers use it to appear as a trusted contact, executive, or celebrity on a video call. A live video call used to be a reasonable way to verify identity; now it can be faked. The best defense is to verify through multiple channels and to treat pressure to act before you can verify as a red flag.